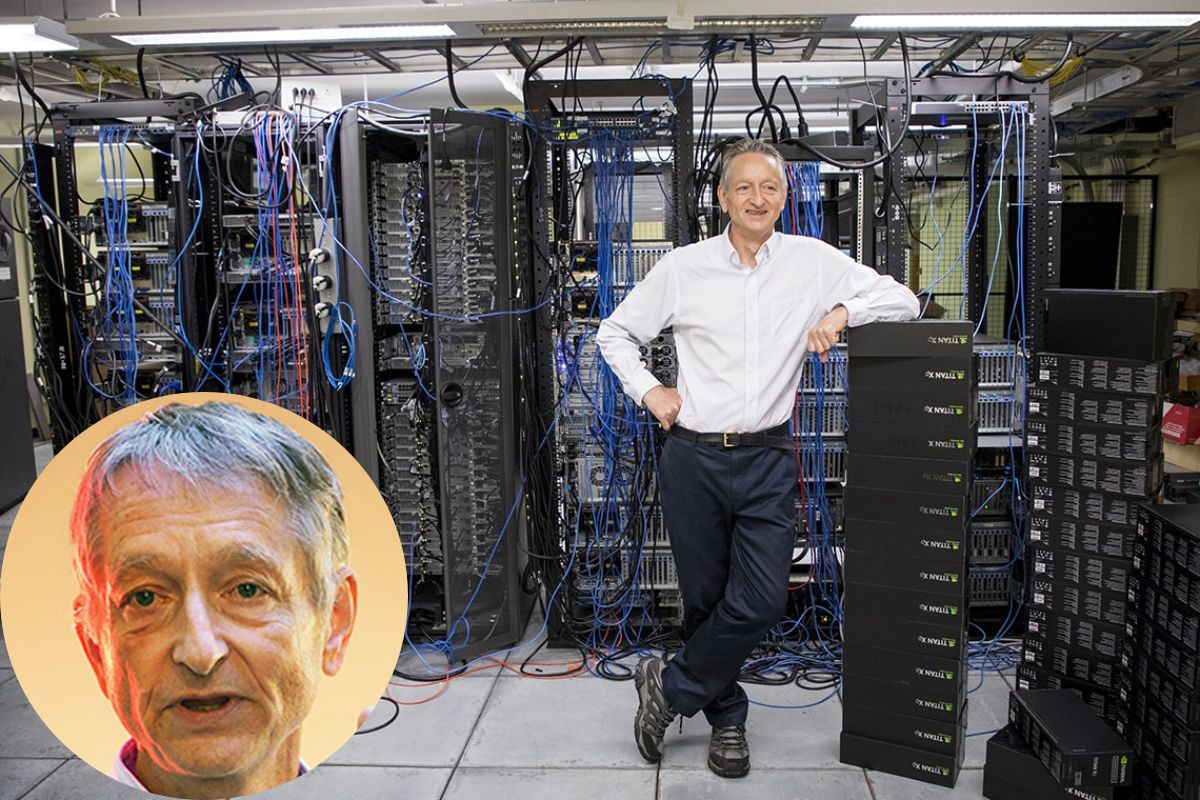

Geoffrey Hinton, a British-Canadian computer scientist and cognitive psychologist often called the “Godfather of Deep Learning,” has made ground-breaking contributions to artificial intelligence. He is best known for his work on neural networks, including helping to popularize the backpropagation algorithm for training multi-layer networks and introducing deep belief networks, an early effective way of training deep models.

Geoffrey Hinton’s Early Life

Hinton was born in London, England, in 1947. He earned a BA in experimental psychology from the University of Cambridge in 1970 and a PhD in artificial intelligence from the University of Edinburgh in 1978. After completing his doctorate, he worked as a researcher at the University of Sussex for a while.

Geoffrey Hinton’s Career

Because it was difficult for him to secure research funding in Britain, Hinton then moved to the United States, where he worked at the University of California, San Diego, and later at Carnegie Mellon University.

While he was a professor at Carnegie Mellon University (1982–1987), Hinton worked with David E. Rumelhart and Ronald J. Williams on applying the backpropagation technique to multi-layer neural networks. He later became the founding director of the Gatsby Charitable Foundation Computational Neuroscience Unit at University College London.

Around the same time, Hinton co-invented Boltzmann machines with David Ackley and Terry Sejnowski. He also contributed to research on distributed representations, time-delay neural networks, mixtures of experts, Helmholtz machines, and products of experts, all of which helped shape the field and continue to inform research on neural networks and deep learning.

He also co-created AlexNet, a deep convolutional neural network developed at the University of Toronto by Alex Krizhevsky, Ilya Sutskever, and Hinton. AlexNet won the 2012 ImageNet Large Scale Visual Recognition Challenge and transformed the field of computer vision.

AlexNet won the ImageNet Large Scale Visual Recognition Challenge (ILSVRC) in 2012 with a top-5 error rate of 15.3% (roughly 85% top-5 accuracy), far ahead of the runner-up’s 26.2%. It is widely credited with starting the deep learning revolution and with playing a big role in popularizing deep learning.
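
ILSVRC classification results are usually reported as top-5 error: a prediction counts as correct if the true label is among the model’s five highest-scoring classes, and AlexNet’s 15.3% top-5 error corresponds to about 85% top-5 accuracy. The sketch below shows how that metric can be computed from raw class scores. The function name `top_k_accuracy` and the toy scores are hypothetical, chosen only for illustration.

```python
def top_k_accuracy(scores, labels, k=5):
    """Fraction of examples whose true label is among the k highest-scoring classes."""
    if len(scores) != len(labels):
        raise ValueError("scores and labels must have the same length")
    hits = 0
    for row, label in zip(scores, labels):
        # Class indices sorted from highest to lowest score, truncated to the top k.
        top_k = sorted(range(len(row)), key=lambda c: row[c], reverse=True)[:k]
        if label in top_k:
            hits += 1
    return hits / len(labels)


# Hypothetical scores for 3 images over 6 classes.
scores = [
    [0.10, 0.50, 0.20, 0.05, 0.12, 0.03],  # true class 1 has the highest score
    [0.30, 0.25, 0.20, 0.15, 0.07, 0.03],  # true class 4 is ranked 5th
    [0.02, 0.08, 0.10, 0.15, 0.25, 0.40],  # true class 0 is ranked last
]
labels = [1, 4, 0]

top1 = top_k_accuracy(scores, labels, k=1)  # 1 of 3 correct
top5 = top_k_accuracy(scores, labels, k=5)  # 2 of 3 correct
print(f"top-1 accuracy {top1:.3f}, top-5 error {1 - top5:.3f}")
```

Top-5 is a more forgiving metric than top-1, which matters for ImageNet because many images contain several plausible objects.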

The network has about 60 million parameters and was trained on two GPUs over roughly five to six days. AlexNet is trained with supervised learning: it learns from images labeled with the objects they contain, adjusting its weights until its predictions match those labels. Since then, AlexNet and the models it inspired have been widely used to recognize people, animals, plants, and other objects in images.
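
AlexNet stacks five convolutional layers and three fully connected layers, and its convolutional stages are built from three basic operations: convolution, ReLU activation, and max pooling. The pure-Python sketch below shows those operations on a single-channel image. It is a simplified illustration under stated assumptions (one channel, stride-1 convolution, no padding, non-overlapping 2×2 pooling), not AlexNet’s real configuration; AlexNet itself used overlapping 3×3 pooling with stride 2. The function names are hypothetical.

```python
def conv2d(image, kernel):
    """'Valid' (no padding), stride-1 2D cross-correlation, as CNN layers compute it."""
    kh, kw = len(kernel), len(kernel[0])
    out_h, out_w = len(image) - kh + 1, len(image[0]) - kw + 1
    out = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            total = 0.0
            for di in range(kh):
                for dj in range(kw):
                    total += image[i + di][j + dj] * kernel[di][dj]
            row.append(total)
        out.append(row)
    return out


def relu(feature_map):
    """Element-wise ReLU: negative responses are set to zero."""
    return [[max(0.0, v) for v in row] for row in feature_map]


def max_pool(feature_map, size=2):
    """Non-overlapping max pooling with a size x size window (leftover edges are dropped)."""
    out = []
    for i in range(0, len(feature_map) - size + 1, size):
        row = []
        for j in range(0, len(feature_map[0]) - size + 1, size):
            row.append(max(feature_map[i + di][j + dj]
                           for di in range(size) for dj in range(size)))
        out.append(row)
    return out


# Hypothetical 5x5 image with a bright vertical stripe in columns 2-3.
image = [[0.0, 0.0, 1.0, 1.0, 0.0] for _ in range(5)]
kernel = [[-1.0, 1.0], [-1.0, 1.0]]  # responds positively to dark-to-bright vertical edges
fmap = conv2d(image, kernel)
print(fmap[0])                       # [0.0, 2.0, 0.0, -2.0]
pooled = max_pool(relu(fmap))
print(pooled)                        # [[2.0, 0.0], [2.0, 0.0]]
```

In a trained network the kernel values are not hand-written like this; they are learned from labeled images during supervised training.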

Despite his many accomplishments, Hinton maintains a humble demeanor and a deep commitment to his work. He continues to do research, looking for new ways to advance artificial intelligence and deepen our understanding of the human brain. He has worked as a researcher at both Google Brain and the University of Toronto.

He serves as an advisor to the Canadian Institute for Advanced Research’s Learning in Machines & Brains program and has held a Canada Research Chair in Machine Learning. His research investigates how neural networks can be used for machine learning, memory, perception, and symbol processing. Over the years, he has written or co-authored more than 200 peer-reviewed papers.

At the Conference on Neural Information Processing Systems (NeurIPS) 2022, Hinton presented a new learning algorithm for neural networks, which he calls the “Forward-Forward” algorithm. It replaces the forward and backward passes of backpropagation with two forward passes: one on positive (i.e., real) data and one on negative data, which could be generated by the network itself.
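
The core idea can be sketched for a single layer. In Hinton’s Forward-Forward paper, each layer has its own “goodness” measure (the sum of its squared ReLU activations), and the probability that an input is positive is taken as the logistic function of goodness minus a threshold. The layer is updated with a purely local gradient so that goodness rises on positive data and falls on negative data, with no error signal passed backward from other layers. This is a simplified, hypothetical illustration: the class name `FFLayer`, the toy data, layer size, threshold, learning rate, and initialization are all arbitrary choices, and a multi-layer version would also normalize each layer’s output before feeding it to the next layer.

```python
import math
import random


def sigmoid(x):
    """Numerically stable logistic function."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)
    return z / (1.0 + z)


class FFLayer:
    """A fully connected ReLU layer trained with a local Forward-Forward objective."""

    def __init__(self, n_in, n_out, threshold=2.0, lr=0.03, seed=0):
        rng = random.Random(seed)
        # Assumption: small non-negative initial weights so every unit starts active on the toy data.
        self.w = [[rng.uniform(0.0, 0.5) for _ in range(n_out)] for _ in range(n_in)]
        self.b = [0.0] * n_out
        self.threshold = threshold
        self.lr = lr

    def forward(self, x):
        """ReLU activations y_j = max(0, b_j + sum_i x_i * w_ij)."""
        out = []
        for j in range(len(self.b)):
            z = self.b[j] + sum(x[i] * self.w[i][j] for i in range(len(x)))
            out.append(max(0.0, z))
        return out

    def goodness(self, x):
        """Goodness = sum of squared activations."""
        return sum(y * y for y in self.forward(x))

    def train_step(self, x, is_positive):
        """One local gradient step; no error signal comes from any other layer."""
        y = self.forward(x)
        g = sum(v * v for v in y)
        s = 1.0 if is_positive else -1.0
        # p(positive) = sigmoid(g - threshold); loss = -log sigmoid(s * (g - threshold)),
        # so dLoss/dg = -s * sigmoid(-s * (g - threshold)).
        dl_dg = -s * sigmoid(-s * (g - self.threshold))
        for j, yj in enumerate(y):
            # dg/dz_j = 2 * y_j, which is already zero where the ReLU is inactive.
            dl_dz = dl_dg * 2.0 * yj
            if dl_dz == 0.0:
                continue
            for i, xi in enumerate(x):
                self.w[i][j] -= self.lr * dl_dz * xi
            self.b[j] -= self.lr * dl_dz


# Hypothetical toy data: positives resemble [1, 1, 0, 0], negatives resemble [0, 0, 1, 1].
pos = [[1.0, 1.0, 0.0, 0.0], [0.9, 1.0, 0.1, 0.0], [1.0, 0.8, 0.0, 0.1]]
neg = [[0.0, 0.0, 1.0, 1.0], [0.1, 0.0, 0.9, 1.0], [0.0, 0.1, 1.0, 0.8]]

layer = FFLayer(n_in=4, n_out=8)
for epoch in range(300):
    for p, n in zip(pos, neg):
        layer.train_step(p, is_positive=True)   # forward pass on positive (real) data
        layer.train_step(n, is_positive=False)  # forward pass on negative data

print([round(layer.goodness(x), 2) for x in pos])  # goodness on positive data
print([round(layer.goodness(x), 2) for x in neg])  # goodness on negative data
```

After training, goodness should be clearly higher on the positive examples than on the negative ones. Because each update uses only that layer’s own activations, no backward pass through the network is needed.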

If it proves effective, the approach could significantly change how neural networks are trained. Beyond his work on artificial neural networks and deep learning, Hinton has made important contributions to cognitive psychology. His research has helped clarify how the human brain processes and stores information, and he has studied topics including perception, attention, and memory.

Geoffrey Hinton’s Net Worth

Geoffrey Hinton’s exact net worth is not publicly known, but it has been estimated at between $5 million and $10 million.

Geoffrey Hinton’s Achievements

Hinton has received numerous honors for his contributions to artificial intelligence and cognitive psychology. In 2012, for example, he was awarded the Queen Elizabeth II Diamond Jubilee Medal.

In 2018, he received the Turing Award, often called the “Nobel Prize of computing,” together with Yoshua Bengio and Yann LeCun. He is also a Fellow of the Association for Computational Linguistics, the Royal Society, and the Royal Society of Canada.

Hinton is perhaps best known for his work on artificial neural networks, which are loosely inspired by the way the human brain works. He was one of the first scientists to investigate backpropagation as a technique for training neural networks, and his results have been crucial to the advancement of deep learning algorithms.

In 1986, Hinton, David Rumelhart, and Ronald Williams published an influential paper showing how backpropagation, now a key tool in the study of artificial neural networks, can train multi-layer networks. Backpropagation uses the chain rule to compute how the network’s error changes with respect to each weight and bias; gradient descent then uses these gradients to adjust the weights and biases, which makes training efficient.
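
To make the division of labor concrete: backpropagation computes the gradients, and gradient descent uses them to update the parameters. The minimal pure-Python sketch below does both for a network with one sigmoid hidden layer, a linear output, and squared-error loss. The class and function names, network size, learning rate, and toy data (the logical OR of two inputs) are all hypothetical choices for illustration. The finite-difference function is a standard way to confirm that the backpropagated gradients are correct.

```python
import math
import random


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


class TinyNet:
    """Inputs -> one sigmoid hidden layer -> one linear output, squared-error loss."""

    def __init__(self, n_in, n_hidden, seed=0):
        rng = random.Random(seed)
        self.w1 = [[rng.uniform(-1.0, 1.0) for _ in range(n_in)] for _ in range(n_hidden)]
        self.b1 = [0.0] * n_hidden
        self.w2 = [rng.uniform(-1.0, 1.0) for _ in range(n_hidden)]
        self.b2 = 0.0

    def forward(self, x):
        h = [sigmoid(b + sum(w * xi for w, xi in zip(row, x)))
             for row, b in zip(self.w1, self.b1)]
        y = self.b2 + sum(w * hj for w, hj in zip(self.w2, h))
        return h, y

    def loss(self, x, t):
        _, y = self.forward(x)
        return 0.5 * (y - t) ** 2

    def backward(self, x, t):
        """Backpropagation: apply the chain rule from the output back to each weight."""
        h, y = self.forward(x)
        dy = y - t                                   # dL/dy
        grad_w2 = [dy * hj for hj in h]              # dL/dw2_j = dL/dy * h_j
        grad_b2 = dy
        # dL/dz_j = dL/dy * w2_j * sigmoid'(z_j), with sigmoid'(z) = h * (1 - h)
        dz = [dy * w2j * hj * (1.0 - hj) for w2j, hj in zip(self.w2, h)]
        grad_w1 = [[dzj * xi for xi in x] for dzj in dz]
        grad_b1 = dz
        return grad_w1, grad_b1, grad_w2, grad_b2

    def sgd_step(self, x, t, lr=0.1):
        """Gradient descent: move every parameter against its gradient."""
        gw1, gb1, gw2, gb2 = self.backward(x, t)
        for j in range(len(self.w1)):
            for i in range(len(x)):
                self.w1[j][i] -= lr * gw1[j][i]
            self.b1[j] -= lr * gb1[j]
            self.w2[j] -= lr * gw2[j]
        self.b2 -= lr * gb2


def numeric_grad_w1(net, x, t, j, i, eps=1e-6):
    """Central finite-difference estimate of dL/dw1[j][i], used to check backward()."""
    original = net.w1[j][i]
    net.w1[j][i] = original + eps
    up = net.loss(x, t)
    net.w1[j][i] = original - eps
    down = net.loss(x, t)
    net.w1[j][i] = original
    return (up - down) / (2 * eps)


# Hypothetical toy data: the logical OR of two inputs.
data = [([0.0, 0.0], 0.0), ([0.0, 1.0], 1.0), ([1.0, 0.0], 1.0), ([1.0, 1.0], 1.0)]
net = TinyNet(n_in=2, n_hidden=3)

grads = net.backward([1.0, 0.0], 1.0)[0]
print(grads[0][0], numeric_grad_w1(net, [1.0, 0.0], 1.0, 0, 0))  # should agree closely

initial = sum(net.loss(x, t) for x, t in data)
for epoch in range(2000):
    for x, t in data:
        net.sgd_step(x, t)
final = sum(net.loss(x, t) for x, t in data)
print(initial, final)  # the total loss decreases during training
```

Deep learning libraries automate the `backward` step, but the computation they perform follows the same chain-rule pattern.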

Hinton’s work on neural networks has also contributed to major advances in natural language processing. His early research on distributed representations laid groundwork for word embeddings, such as those produced by the Word2vec technique developed by Tomas Mikolov and colleagues at Google, which are widely used in natural language processing tasks.

In 2012, Hinton and his team at the University of Toronto made a significant advance in image recognition when their deep learning model achieved record-breaking accuracy on the ImageNet dataset. The result was notable because it showed that deep learning could solve challenging problems, opening up new opportunities for its use across a range of applications.